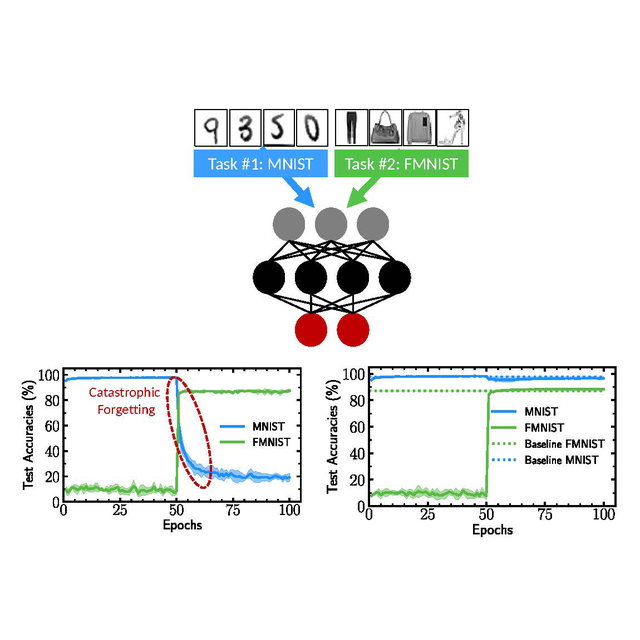

In recent years, artificial intelligence has made considerable progress thanks to deep learning: computer programs called deep artificial neural networks can now learn very complex tasks. However, these programs suffer from a serious limitation: they are subject to “catastrophic forgetting.” When an artificial neural network that has been trained on one task is then trained on a new task, it largely loses what it learned previously. Our brains do not have this problem: we are able to learn new tasks continuously.

C2N researchers have found a way to address this problem using “binarized” neural networks, a type of neural network developed since 2016. These networks, in which most of the variables are binary, are studied intensely as a basis for low-energy circuits dedicated to artificial intelligence. The researchers have shown that there is an unexpected link between binarized artificial neural networks and neuroscientific models of synaptic “metaplasticity,” and that this link can be used to considerably reduce catastrophic forgetting. While synaptic plasticity is the brain's ability to alter the connections between its neurons, metaplasticity is its ability to alter how plastic those connections are.
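To make the idea concrete, here is a minimal sketch of what a metaplastic update for a single binarized weight might look like. It assumes the common way of training binarized networks, in which each binary weight is the sign of a hidden real value, and it assumes an attenuation factor of the form `1 - tanh(m * hidden)**2`. The function names, the parameters `lr` and `m`, and the exact form of the attenuation are illustrative assumptions, not details taken from this article.

```python
import math


def binarize(hidden):
    """Binary weight used by the network: the sign of the hidden value."""
    return 1.0 if hidden >= 0 else -1.0


def metaplastic_step(hidden, grad, lr=0.1, m=1.0):
    """One update of a hidden weight (hypothetical rule, for illustration).

    An update that pushes the hidden value toward zero could eventually
    flip the binary weight, so it is attenuated more strongly the larger
    |hidden| already is. An update that pushes the hidden value away
    from zero is applied in full.
    """
    delta = -lr * grad
    moves_toward_zero = delta * hidden < 0
    if moves_toward_zero:
        # Assumed attenuation: close to 1 near zero, close to 0 for large |hidden|.
        delta *= 1.0 - math.tanh(m * hidden) ** 2
    return hidden + delta


# A strongly consolidated weight barely moves toward zero...
consolidated = metaplastic_step(3.0, grad=1.0)
# ...while a weak weight receives almost the full update.
weak = metaplastic_step(0.1, grad=1.0)
print(consolidated, weak, binarize(consolidated), binarize(weak))
```

Under these assumptions, the magnitude of the hidden value acts as a measure of how consolidated a synapse is: weights that earlier learning pushed far from zero resist being flipped by later learning, while weights near zero remain free to change.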

The researchers applied this new technique to various tasks in which an artificial neural network continuously learns new information. Unlike other approaches proposed in the literature to reduce catastrophic forgetting, their approach, like our brains, does not require a formal separation of the tasks to be remembered. This work illustrates the value of linking research in neuroscience and artificial intelligence.

This work also resonates with the new magnetic nano-components developed at C2N, whose rich physics could allow them to implement this metaplastic behavior intrinsically. The next step for C2N will therefore be to demonstrate metaplastic systems built from such nano-components.

Reference

Nature Communications volume 12, Article number: 2549 (2021)

Axel Laborieux, Maxence Ernoult, Tifenn Hirtzlin & Damien Querlioz

DOI https://doi.org/10.1038/s41467-021-22768-y

Figure caption

Neural networks used in artificial intelligence (top) are subject to catastrophic forgetting. If they are taught to recognize handwritten digits (MNIST) and then clothing items (FMNIST), these networks lose the ability to recognize digits (bottom, left). Thanks to the metaplastic approach proposed by C2N researchers, these networks can learn the two tasks one after the other (bottom, right).

Contact: Damien Querlioz